Prowl was initially designed as an in-house tool to aid engagements where there’s a requirement to capture email addresses from LinkedIn. Recently, it has been further developed to provide the same initial functionality, plus other features such as matching emails against data breaches, identifying current job openings at the target organisation (handy for targeting recruiters and HR when social engineering), subdomain identification and much more. It does so without breaching LinkedIn’s Terms of Service. We are formally releasing Prowl to the public today.

Installation

The first step of the installation is to clone the GitHub repository that contains all of the code required by Prowl.

git clone https://github.com/nettitude/prowl

Once you’ve downloaded the script, it’s time to update the package lists and install all of the required dependencies. This can be done by copying and pasting the following commands into your terminal.

sudo apt-get update

sudo apt-get install python-pip python-lxml xvfb

sudo pip install dnspython Beautifulsoup4 Gitpython

Using Prowl

A core objective of Prowl is to keep the tool both simple and independent – it doesn’t require any API keys or cookies in order for it to run. The minimal amount of information required includes the company name and the format of the organisation’s email addresses. It should be noted that all optional flags can be selected via -a, excluding the proxy.

As mentioned, the company name needs to be supplied to Prowl. This can be done by adding the -c flag followed by the organisation’s name. If the name contains any spaces then it needs to be wrapped in quotation marks, for example “Acme Inc”. In addition, the email format must be supplied via the -e flag, followed by the mark-up and domain.

Examples

- matthewpickford@nettitude.com ->

-e "@nettitude.com"

- mpickford@nettitude.com ->

-e "@nettitude.com"

- matthewp@nettitude.com ->

-e "- @nettitude.com"

- mp@nettitude.com ->

-e "- @nettitude.com"

Other characters such as hyphens can be added between the mark-up, for example:

- matthew-pickford@nettitude.com ->

-e "- @nettitude.com"
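To make the idea of an email format concrete, here is a minimal Python sketch of how a name could be expanded into an address from a pattern. The `build_email` helper and the placeholder names (`{first}`, `{last}`, `{fi}`, `{li}`) are hypothetical and use Python's own `str.format` syntax; they are not Prowl's mark-up and should not be passed to the -e flag.

```python
def build_email(full_name: str, pattern: str) -> str:
    """Expand a hypothetical pattern such as '{fi}{last}@example.com'.

    Placeholders (illustrative only, not Prowl's mark-up):
      {first} first name, {last} last name,
      {fi} first initial, {li} last initial.
    """
    parts = full_name.lower().split()
    first, last = parts[0], parts[-1]
    return pattern.format(first=first, last=last, fi=first[0], li=last[0])


# The same person rendered in three of the formats listed above.
print(build_email("Matthew Pickford", "{first}{last}@nettitude.com"))
print(build_email("Matthew Pickford", "{fi}{last}@nettitude.com"))
print(build_email("Matthew Pickford", "{first}-{last}@nettitude.com"))
```

Any literal character, such as the hyphen in the last call, is simply copied through between the placeholders.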

Password Identification

An early feature was the identification of email addresses that are found in publicly known password dumps. This is still possible via the ‘HaveIBeenPwned’ API. However, with the latest version of Prowl, instead of displaying this information via STDOUT, the results are stored within the output accounts file by default.

Finding Jobs

It’s a well-known fact that recruiters and HR departments are usually a weak link in terms of clicking links and opening documents. It is after all their job to review CVs, so they’re not entirely to blame. To build a rapport with these heavy clickers it can be useful to know which jobs are currently available within the organisation. Prowl can do this by scraping Indeed jobs without needing any extra information; all that is needed is to include the -j flag in the arguments.

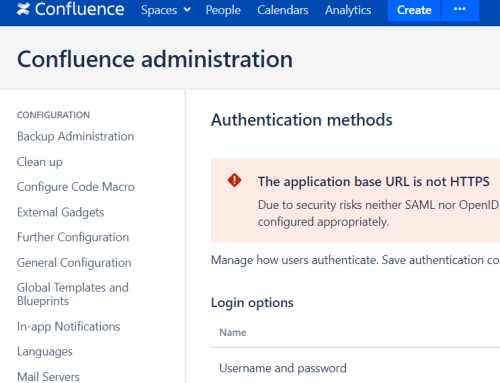

Subdomain Identification

Prowl isn’t only used for social engineering. It can also be useful for password guessing attacks against services such as OWA. The email addresses gathered are only as useful as the services available, so to try and find all IP addresses of the organisation Prowl identifies all registered SSL/TLS certificates for the domain. As seen in the image below, if the hostname doesn’t have an associated IP address then the result is highlighted in red. However, if an IP address is found then it’s highlighted in orange.
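The resolve-and-highlight step described above can be sketched roughly as follows. This is an illustration only: it assumes the hostnames have already been collected from certificate data, it uses the standard library's `socket` module rather than whatever resolver Prowl uses internally, and the `resolve` and `report` helpers are hypothetical names.

```python
import socket
from typing import Callable, Iterable, List, Optional

RED = "\033[31m"
ORANGE = "\033[33m"  # basic ANSI has no orange; yellow is the nearest colour
RESET = "\033[0m"


def resolve(hostname: str) -> Optional[str]:
    """Return an IPv4 address for hostname, or None if it does not resolve."""
    try:
        return socket.gethostbyname(hostname)
    except (OSError, UnicodeError):
        return None


def report(hostnames: Iterable[str],
           resolver: Callable[[str], Optional[str]] = resolve) -> List[str]:
    """Build one coloured line per hostname: red if unresolved, orange if not."""
    lines = []
    for host in hostnames:
        ip = resolver(host)
        colour = ORANGE if ip else RED
        lines.append(f"{colour}{host} {ip or 'no IP found'}{RESET}")
    return lines
```

Passing the resolver in as a parameter keeps the colouring logic testable without making real DNS queries; calling `report(["owa.example.com"])` with the default resolver performs a live lookup.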

Proxy

A requested feature was the ability to pass the search requests via a proxy. In the latest version of Prowl, this has been implemented. Using the -p flag followed by the proxy address will forward all Yahoo and Bing requests via the supplied server. If the proxy fails to pass a request, Prowl will not fall back to making requests directly; it exits instead. An example:

-p https://127.0.0.1:8080
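The "exit rather than fall back" behaviour is worth illustrating, since a silent fallback would expose the tester's real IP address. Below is a minimal sketch using Python's standard `urllib`; the function names are hypothetical and this is an assumption about how such behaviour could be written, not Prowl's actual code.

```python
import sys
import urllib.error
import urllib.request


def build_proxy_opener(proxy_url: str) -> urllib.request.OpenerDirector:
    """Create an opener that routes HTTP and HTTPS traffic via proxy_url."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


def fetch_or_exit(opener, url: str, timeout: float = 10.0) -> bytes:
    """Fetch url through the opener; abort the program if the request fails.

    There is deliberately no retry without the proxy.
    """
    try:
        with opener.open(url, timeout=timeout) as response:
            return response.read()
    except (urllib.error.URLError, OSError) as exc:
        sys.exit(f"Proxy request failed, exiting: {exc}")
```

A caller would build the opener once, for example with `build_proxy_opener("https://127.0.0.1:8080")`, and route every search request through `fetch_or_exit`.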

Output

It’s always been a frustration of mine to run a tool and then realise I forgot to supply the output flag. Prowl automatically saves all collected data into a company folder within the output directory; each data source is saved to its own CSV file.
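As a rough illustration of this layout, the sketch below writes one CSV file per data source into a folder named after the company. The `save_rows` helper, the file naming and the column names are assumptions made for the example; Prowl's real file names and columns may differ.

```python
import csv
from pathlib import Path
from typing import Iterable, Sequence


def save_rows(output_dir: str, company: str, source: str,
              header: Sequence[str], rows: Iterable[Sequence[str]]) -> Path:
    """Write rows to <output_dir>/<company>/<source>.csv and return the path."""
    folder = Path(output_dir) / company
    folder.mkdir(parents=True, exist_ok=True)  # create the company folder if needed
    path = folder / f"{source}.csv"
    # newline="" lets the csv module control line endings itself.
    with path.open("w", newline="", encoding="utf-8") as handle:
        writer = csv.writer(handle)
        writer.writerow(header)
        writer.writerows(rows)
    return path
```

For instance, `save_rows("output", "Acme Inc", "accounts", ["name", "email"], rows)` would create `output/Acme Inc/accounts.csv` without the user having to ask for it.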

Comparison

As with any ongoing project, it’s always good to compare it to other tools to see how it performs. With Nettitude as the search criteria, the latest iteration of Prowl returned the most results. This may vary from organisation to organisation, but from our testing it appears to be a consistent trend.

| Tool | Results |
| --- | --- |
| Prowl | 53 |
| InSpy | 35 |
| Harvester | 9 |

Future

From now on, Prowl will be receiving regular updates, predominantly to adapt to changes made to the scraping sources. A potential idea on the horizon is the ability to push the results from Prowl into a database, whether that be locally or on an internal network. A dream for Prowl would be a way of identifying the email format without the need to provide it as a script argument.

If you have any ideas or feature requests, feel free to make a request via GitHub or drop an email to mpickford at nettitude [.] com.